VOXReality deployed the pretrained models in three use cases covering sectors that were heavily affected by COVID-19.

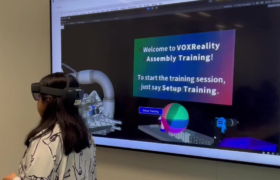

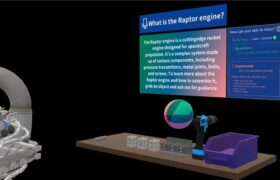

DIGITAL AGENT

The Training Assistant provides voice-activated guidance for complex assembly tasks by blending audio-visual contexts for spatially and semantically grounded interaction.

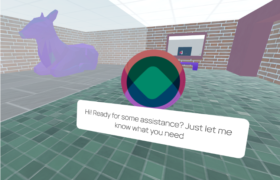

VIRTUAL CONFERENCING

THEATRE

The AR Theatre elevates live performance by delivering personalized, translated captions and AR visual effects (VFX) directly to the audience via XR headsets.

AR glasses and visual effects put the technologies developed in VOXReality to the test, merging them with stage action, sound, and digital scenography into one immersive storytelling experience.

Hippolytus (in the Arms of Aphrodite) aimed to explore intimacy and presence in XR theatre: two actors performed for two spectators wearing Magic Leap AR headsets, dissolving the distance between audience and stage and between the physical and digital dimensions.

Translations are pre-generated offline and proofread by humans, which ensures high literary quality and reduces live latency. The system's central control server orchestrates the experience, triggering VFX on both verbal cues (caption matches) and visual cues (VL detection of stage events such as actor entrances and exits) according to a predetermined VFX plan.
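The cue-driven triggering described above could be sketched roughly as follows. This is a minimal illustration, not the VOXReality implementation: the `Cue` and `VfxController` names, the cue keys, the effect names, and the rule that each cue fires only once per performance are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Cue:
    """One entry of a hypothetical VFX plan."""
    kind: str    # "verbal" (caption match) or "visual" (detected stage event)
    key: str     # caption identifier or stage-event label (illustrative values)
    effect: str  # name of the visual effect to trigger


@dataclass
class VfxController:
    """Matches incoming cues against a fixed plan (assumed design)."""
    plan: list[Cue]
    fired: set[Cue] = field(default_factory=set)

    def on_event(self, kind: str, key: str) -> list[str]:
        """Return the effects to trigger for an incoming verbal or visual cue."""
        triggered = []
        for cue in self.plan:
            # Assumption: a cue fires at most once per performance.
            if cue.kind == kind and cue.key == key and cue not in self.fired:
                self.fired.add(cue)
                triggered.append(cue.effect)
        return triggered


# Hypothetical plan: one caption-driven and one stage-event-driven effect.
plan = [
    Cue("verbal", "caption_012", "sea_mist"),
    Cue("visual", "actor_enters", "golden_glow"),
]
controller = VfxController(plan)
first = controller.on_event("verbal", "caption_012")   # ["sea_mist"]
repeat = controller.on_event("verbal", "caption_012")  # [] (already fired)
entrance = controller.on_event("visual", "actor_enters")  # ["golden_glow"]
```

Keeping the plan as plain data separates the artistic decisions (which effect belongs to which moment) from the matching logic, so the same controller could in principle serve both caption matches and detected stage events.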