Master’s Student: Gabriele Princiotta

Thesis Advisors (TUM): Dr. Sandro Weber, Prof. Dr. David Plecher

Thesis Advisor (VOXReality): Leesa Joyce

A Master’s thesis titled “Exploring User Interaction Modalities for Open-Ended Learning in XR Training Scenarios” by Gabriele Princiotta was recently defended at the Technische Universität München (TUM). The thesis was co-advised by Dr. Sandro Weber and Prof. Dr. David Plecher from TUM, and Leesa Joyce from the VOXReality consortium.

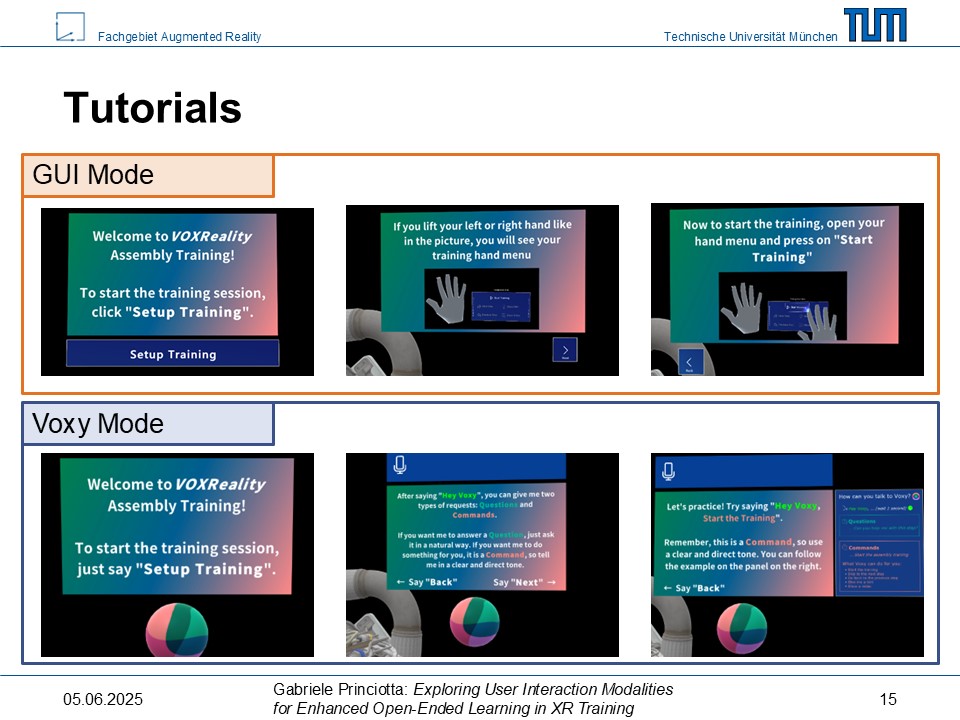

This study explored how different interaction modalities affect user experience in open-ended XR training environments. Specifically, the research focused on an AR assembly training application developed for the Microsoft HoloLens 2. Two interaction methods were designed and compared: a traditional hand-based graphical user interface (GUI Mode) and an AI-powered voice interaction mode (Voxy Mode), supported by a large language model (LLM) and automatic speech recognition (ASR).

The user study employed a within-subjects design to evaluate the impact of these modalities on user experience, cognitive load, usability, and task engagement. While quantitative findings showed significantly faster task completion times in GUI Mode—primarily due to shorter onboarding and user familiarity—no statistically significant differences emerged across other user experience metrics. This outcome was influenced by a strong learning effect throughout the study sessions.

However, qualitative feedback indicated a clear user preference for Voxy Mode. Participants highlighted the engaging, supportive nature of interacting with the conversational AI assistant (named ARTA), noting that it made the training feel more natural and less mechanical. At the same time, participants saw the limited accuracy of current ASR and the assistant’s handling of nuanced or ambiguous input as key areas for future development.

The VOXReality partners played an essential role in enabling this research. They provided the AI voice assistant model, customized it for integration into the HoloLens application, and supported the technical setup needed for the experiment. A general assembly test provided by the consortium was used as the basis for the training scenario in the user study.

The results highlight the potential of multimodal, voice-driven interfaces in XR training environments to improve engagement and perceived support—particularly in open-ended learning tasks. At the same time, the thesis underscores the practical limitations tied to current speech recognition capabilities and the need for more sophisticated user intent recognition and contextual awareness from AI assistants in XR.

Finally, the study draws attention to the methodological challenges posed by learning effects in within-subjects comparative studies of interface designs. As XR training applications become increasingly personalized and adaptive, future research should focus on enhancing the intelligence and robustness of voice interfaces and on minimizing study bias to ensure reliable UX evaluation.

Gabriele Princiotta

Unity XR Developer @ Hololight